Rethinking Medical LLM Hallucinations: A System-Level Survey

Published in MetaArXiv, 2026

A systems-level survey arguing that hallucination in medical LLMs is a structural property of probabilistic generation, not a fixable bug. It synthesizes more than 50 papers on detection, mitigation, and benchmarking through a risk-management lens.

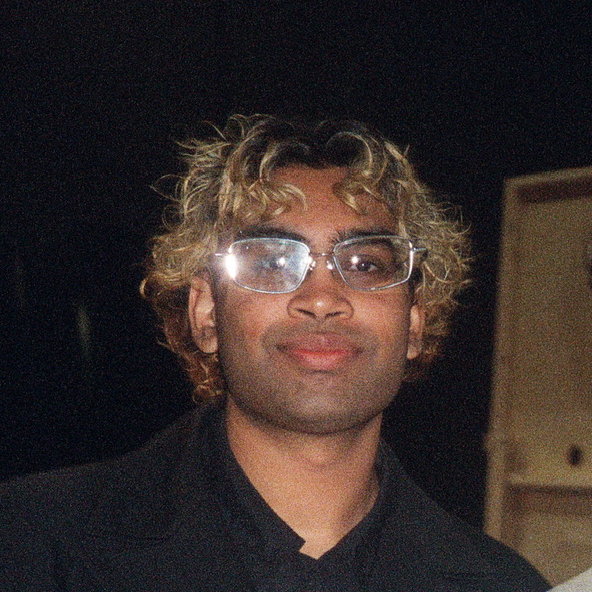

Recommended citation: Matthews, A., Vankadaru, V., Roosta, T., & Passban, P. (2026). Rethinking Medical LLM Hallucinations: A System-Level Survey. MetaArXiv.
