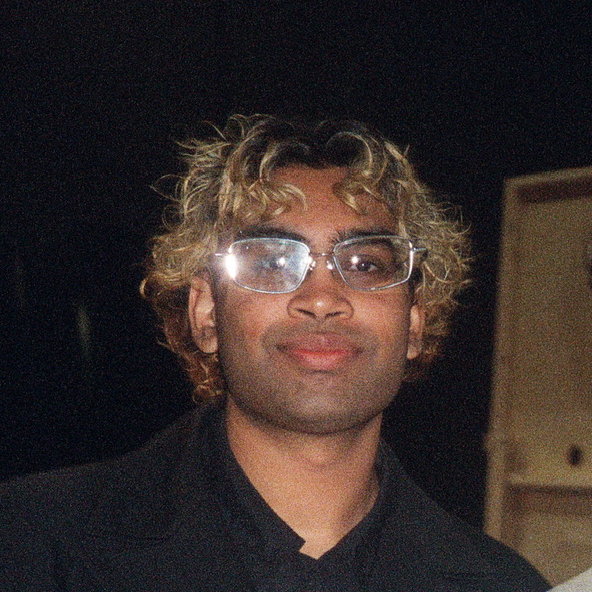

Vijay Vankadaru

Machine learning is, at its core, a theory of learning itself — and I find that genuinely exciting. The idea that you can formalize what it means to generalize, to represent, to reason, and then actually build systems that do those things, is one of the more remarkable things happening in science right now.

My work focuses on the harder cases: multimodal signals, temporal dependencies, high-stakes deployment. I want to understand not just whether a model works, but why it works and when it breaks. That question, when ML actually works and what breaks it, runs through everything I do.

Much of that work has centered on temporal and multimodal learning: designing architectures that reason across audio, vision, and text at different timescales. I’ve validated a lot of it in clinical settings at DASION, where I’ve been building ML systems since 2021 in collaboration with Prof. Weiqing Gu at Harvey Mudd. The work is supported by $2.5M in NSF Phase I and II funding.

At Berkeley (MIDS, 4.0 GPA), I’m a Graduate Research Assistant in two groups. With Prof. Cornelia Paulik, I work on retrieval-augmented generation for pediatric medical QA. With Prof. Tanya Roosta, I co-authored a survey on hallucination in medical LLMs, published on MetaArXiv, and I’m now developing follow-on work on hallucination detection and mitigation targeting a top-tier venue.

The math underneath all of this (representation theory, optimization landscapes, attention as soft routing, the geometry of embedding spaces) is what keeps me up at night, in the good way. The stakes matter too: making ML reliable enough to inform clinical decisions demands a level of rigor that pushes the methods themselves forward.

Research

Multimodal learning and temporal alignment: Building models that fuse audio and text representations across time. Current work: a multimodal multi-instance learning framework for depression detection that combines Wav2Vec 2.0 audio features with mT5/RoBERTa text features, aligned in time via CTC, targeting NeurIPS 2026.

LLM reliability and hallucination: Understanding why language models fail in structured domains and how to detect and mitigate those failures systematically. Co-authored a system-level survey on hallucination in medical LLMs (MetaArXiv, 2026). Ongoing work with Prof. Tanya Roosta develops novel detection and mitigation methods.

Retrieval systems for knowledge-intensive tasks: Building RAG pipelines for domains where retrieval quality directly affects downstream safety. Current work: PedRAG, a dual-retrieval architecture for pediatric medical QA, targeting ICML 2026.

News

- Mar 2026 — “Rethinking Medical LLM Hallucinations: A System-Level Survey” published on MetaArXiv. Co-authored with Asha Matthews, Prof. Tanya Roosta, and Prof. Peyman Passban at UC Berkeley.

- Jan 2026 — Started Graduate Research Assistantship with Prof. Tanya Roosta at UC Berkeley.

- Aug 2025 — Started Graduate Research Assistantship with Prof. Cornelia Paulik at UC Berkeley.